This article explains how to recover from trail file corruption in Oracle GoldenGate 12c Release 2 (12.2). Prior to OGG 12.2, recovering from a corrupted trail file involved many tedious steps, and a mistake in any one of them could cause data integrity issues and break replication. Below are the steps to recover from trail file corruption prior to OGG 12.2 (OGG 11g, 12cR1):

1. Note down the Last Applied SCN and the Timestamp from the target.
2. In the Source, scan the trail file for this Last Applied SCN.
3. Re-extract the data after this SCN by altering the Pump Extract process.
4. Alter the Replicat process to read from the new trail seq#.
5. Start the Pump and Replicat processes.
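As a rough illustration, the manual procedure above might look like the following GGSCI commands. This is a sketch under assumptions, not the exact commands from any particular recovery: the group names DMP1 and REP1 are borrowed from the example later in this article, both processes are assumed to be stopped before they are altered, and the values in angle brackets are placeholders that you would have to determine yourself by scanning the source trail.

```
-- On the target: display the Replicat checkpoint details before changing anything
INFO REPLICAT REP1, SHOWCH

-- On the source: reposition the Pump to the record that follows the last applied SCN
-- (<seqno> and <rba> are placeholders found by scanning the source trail)
ALTER EXTRACT DMP1, EXTSEQNO <seqno>, EXTRBA <rba>

-- On the source: make the Pump roll over to a new remote trail file on restart
ALTER EXTRACT DMP1, ETROLLOVER

-- On the target: point the Replicat at the start of the new trail seq#
-- (<new_seqno> is a placeholder for the seq# created by the rollover)
ALTER REPLICAT REP1, EXTSEQNO <new_seqno>, EXTRBA 0

-- Start the Pump (source) and the Replicat (target)
START EXTRACT DMP1
START REPLICAT REP1
```

Every repositioning value here has to be worked out by hand, which is where mistakes tend to creep in.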

Oracle GoldenGate 12.2 introduces a new feature called “Automated Remote Trail File Recovery”. In this article, I am going to show with an example how simple it is to recover from trail file corruption from OGG 12.2 onward.

Server and configuration details are below.

SOURCE

Hostname - OGGR2-1.localdomain

Database - GGDB1

Schema - SOURCE

Table Name - T1

OGG - /vol3/ogg

Extract - EXT1

Pump - DMP1

TARGET

Hostname - OGGR2-2.localdomain

Database - GGDB2

Schema - TARGET

Table Name - T1

OGG - /vol3/ogg

Replicat - REP1

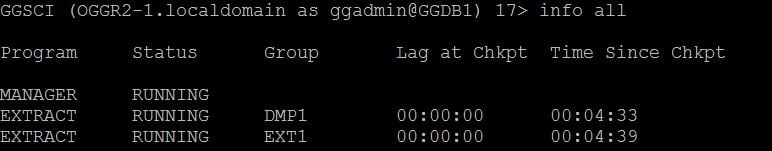

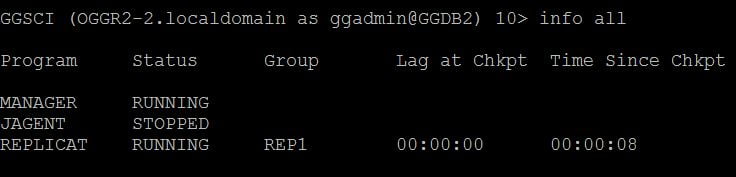

From the output, you can see that all the Oracle GoldenGate processes are running without any issues.

The parameter files of the Oracle GoldenGate processes are as follows:

EXTRACT EXT1

USERID ggadmin, PASSWORD oracle

EXTTRAIL /vol3/ogg/dirdat/et

TABLE source.t1;

EXTRACT DMP1

RMTHOST OGGR2-2, MGRPORT 7879

RMTTRAIL /vol3/ogg/dirdat/ft

TABLE source.t1;

REPLICAT REP1

USERID ggadmin, PASSWORD oracle

MAP source.t1, TARGET target.t1;

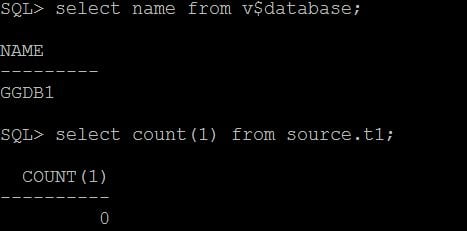

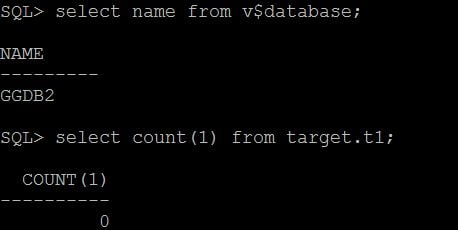

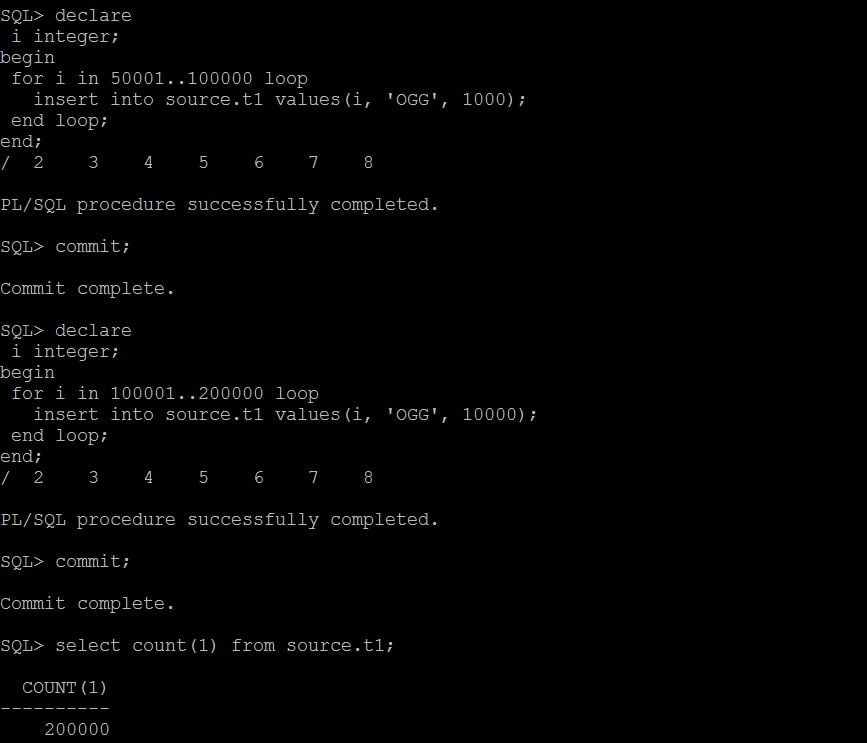

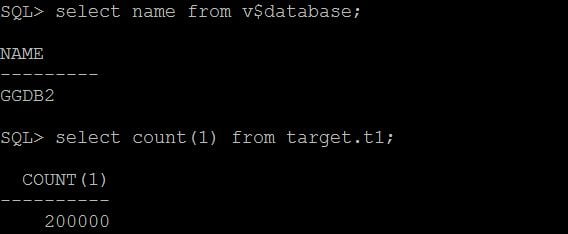

Check the count of the table T1 in source and target.

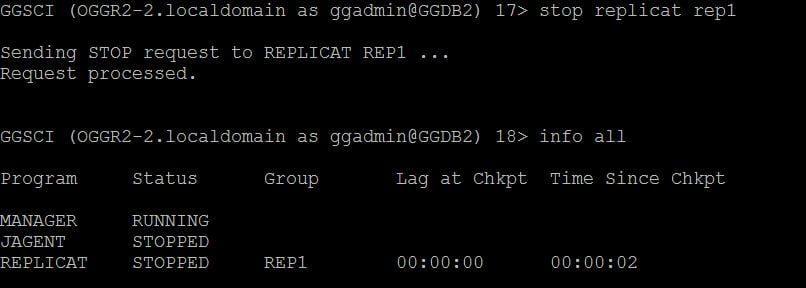

Now I am going to stop the Replicat process on the target side so that I can corrupt one of the trail files that will be generated on the target server.

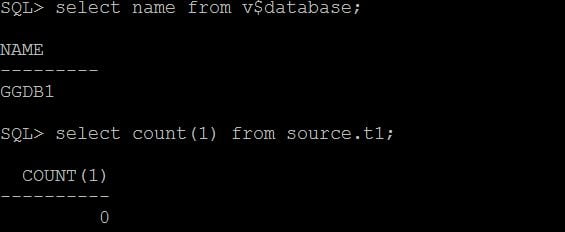

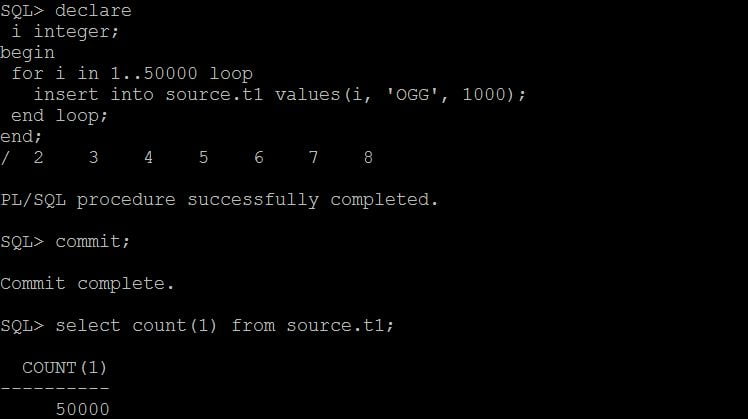

Insert some records into the source table SOURCE.T1.

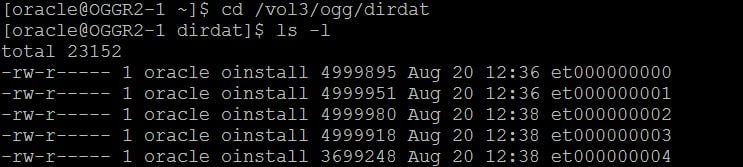

The following trail files are generated on the source:

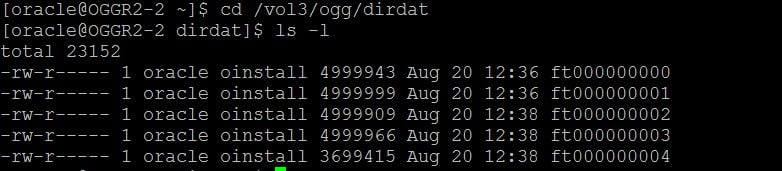

All of this data is pumped to the target server by the Pump process.

On the target server, I have corrupted the trail file ft000000002. Now I am going to start the Replicat process REP1 on the target side, and it abends with the error “Incompatible Error”. Let us see it.

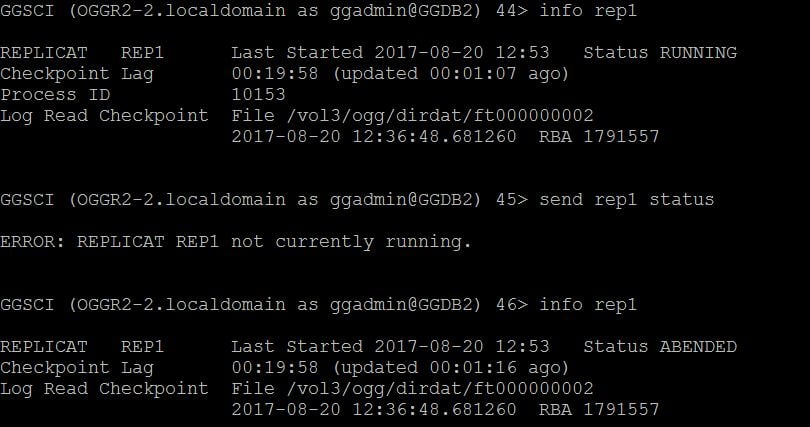

The Replicat process abended.

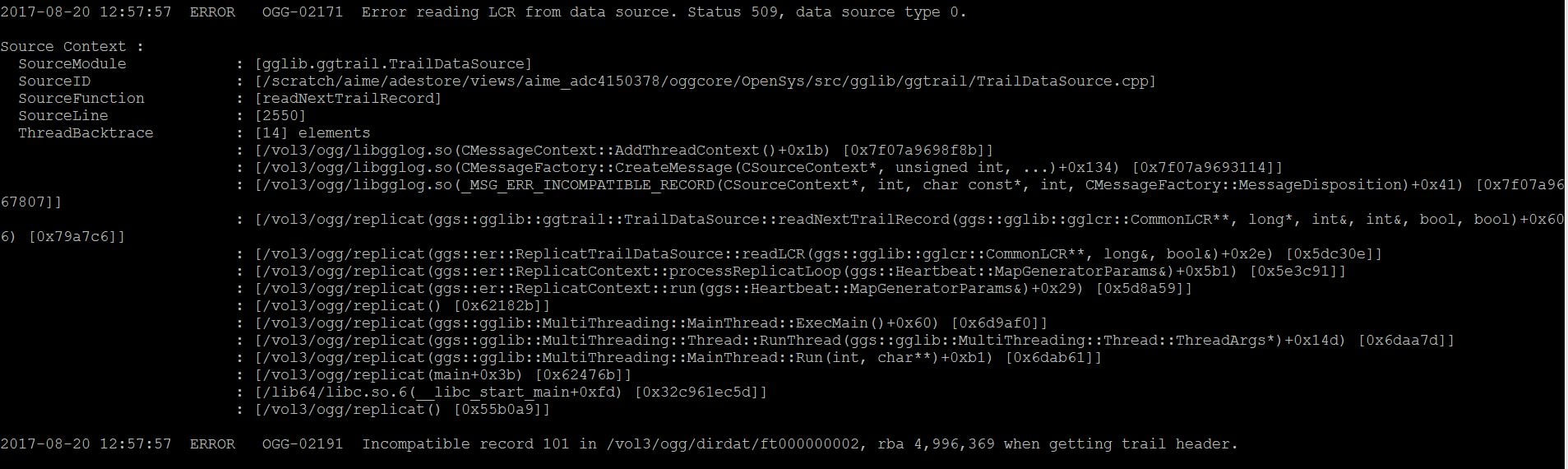

The report file shows the following error:

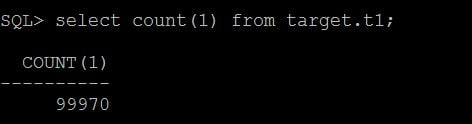

So the trail file ft000000002 has a corrupted record at RBA 4996369. Also, only a few records were replicated to the target table TARGET.T1.

The Replicat process cannot move any further because there is a bad record in the trail file. Let’s use the new feature, “Automated Remote Trail File Recovery”, which is available from OGG 12.2. The steps are:

1. Stop the Pump process on the source side.
2. On the target, delete all the trail files starting from the corrupted seq#.
3. Start the Pump process on the source. Bouncing the Extract Pump automatically rebuilds any missing trail files.
4. Start the Replicat process.

The detailed steps are below:

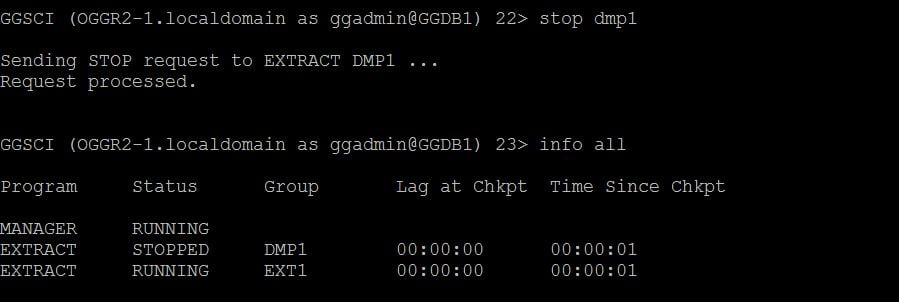

1. Stop the Extract Pump (Data Pump) process on the source.

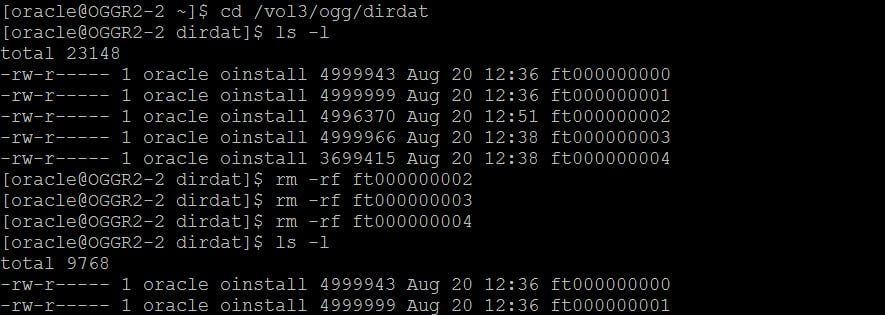

2. On the target, delete all the trail files starting from the corrupted seq#, ft000000002.

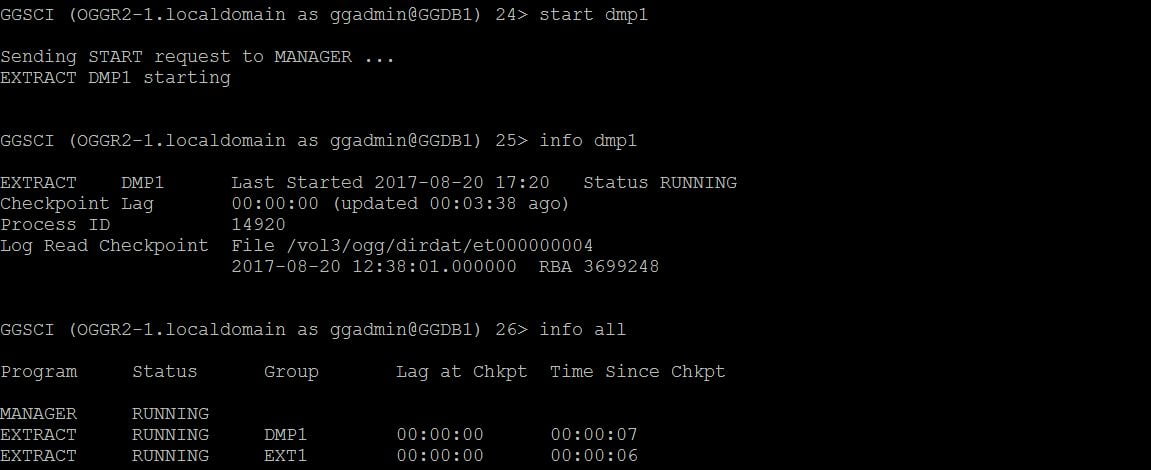

3. Start the Pump process on the source. Bouncing the Extract Pump automatically rebuilds any missing trail files.

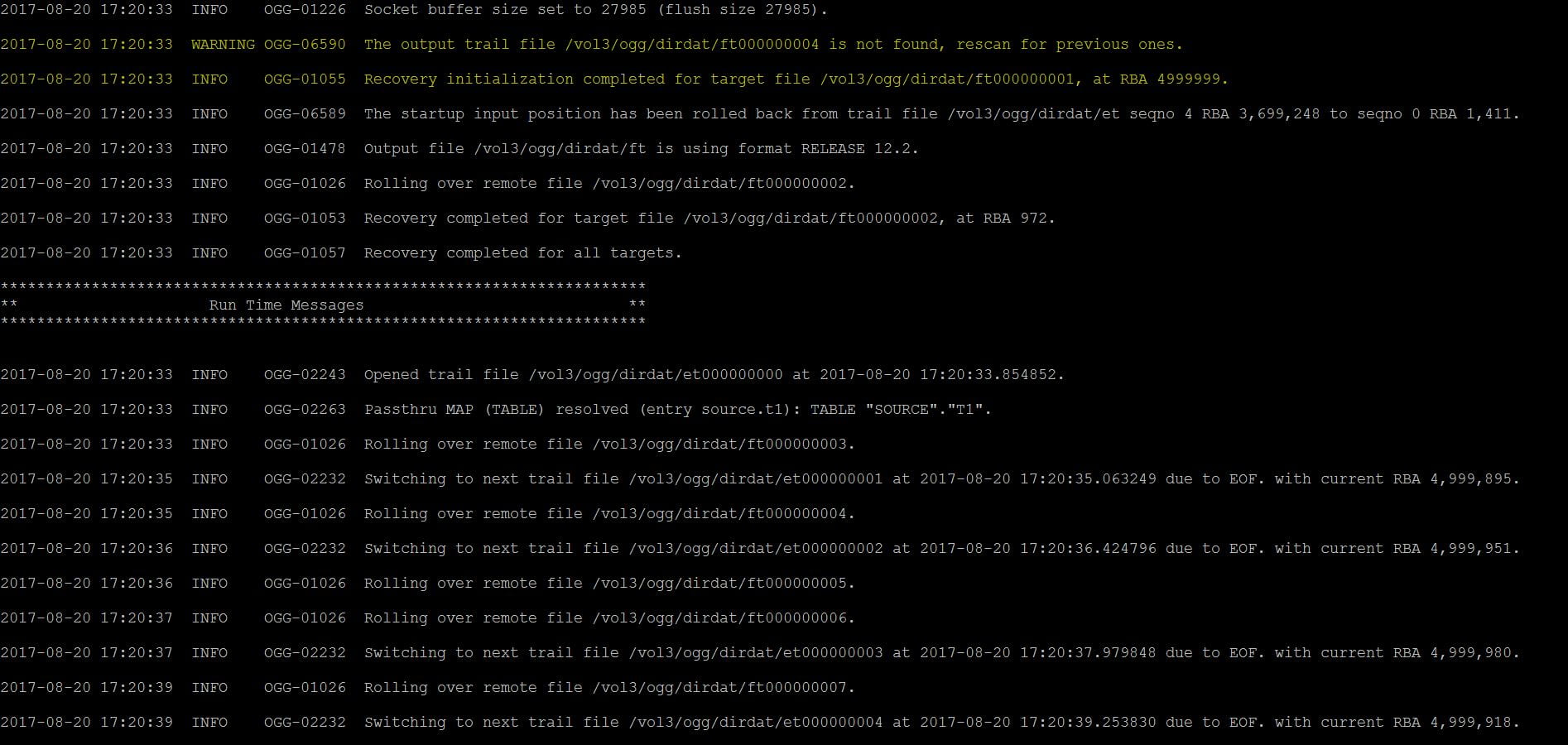

Report file of the Data Pump process DMP1:

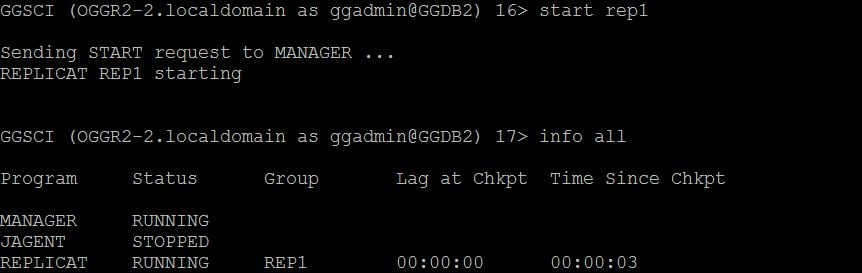

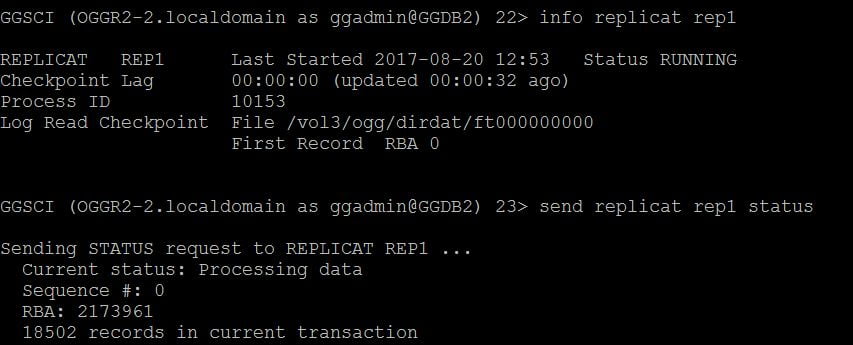

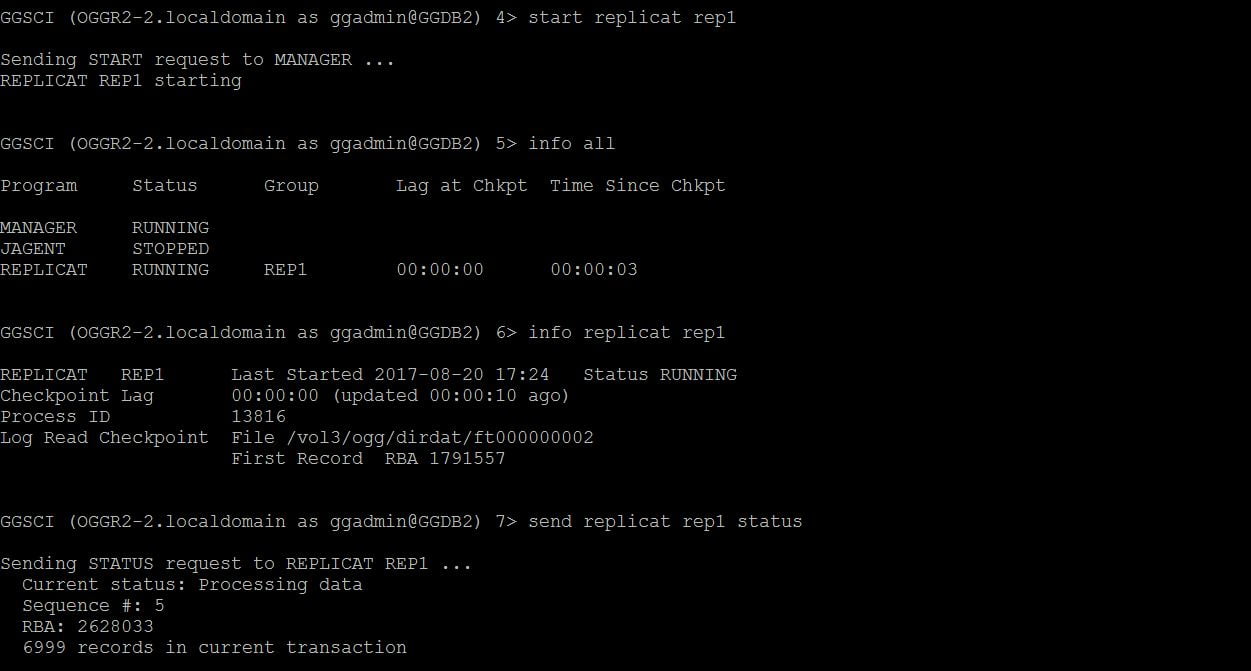

4. Finally, start the Replicat process REP1.

You can see that the Replicat is running fine and the records are applied to the target table TARGET.T1.
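Put together, the four steps can be sketched as the GGSCI commands below. This is only an outline based on the names used in this article (DMP1, REP1 and the remote trail /vol3/ogg/dirdat/ft). It assumes ft000000002 is the only file from the corrupted seq# onward, so any higher-numbered ft files on your target would need to be removed as well. SHELL is the GGSCI command for running an operating system command; you could equally run rm from a normal shell prompt.

```
-- 1. On the source: stop the Extract Pump
STOP EXTRACT DMP1

-- 2. On the target: remove the corrupted remote trail file
--    (assumption: no later ft files exist; remove those too if they do)
SHELL rm /vol3/ogg/dirdat/ft000000002

-- 3. On the source: start the Pump, which rebuilds the missing remote trail
START EXTRACT DMP1

-- 4. On the target: start the Replicat and check its status
START REPLICAT REP1
INFO REPLICAT REP1
```

No repositioning of the Pump or the Replicat is needed here, which is the main difference from the pre-12.2 procedure.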

That is how simple it is to recover from a corrupted trail file at the target. Compared with the steps required prior to OGG 12.2, the procedure from OGG 12.2 onward is much simpler. However, there are some requirements that must be met to use Automated Remote Trail File Recovery:

1. At least one valid, complete trail file must be present prior to the corrupted trail file.
2. The corresponding trail files must still exist on the source.
3. NOFILTERDUPTRANSACTIONS must not be used.

You may wonder about the last point, NOFILTERDUPTRANSACTIONS. What if the Replicat process, after the restart, reads and applies records that have already been applied on the target? You would end up with errors like “ORA-00001: unique constraint violated”. To overcome this, a new option, FILTERDUPTRANSACTIONS, has been introduced in OGG 12c.

FILTERDUPTRANSACTIONS | NOFILTERDUPTRANSACTIONS

a) It causes Replicat to ignore transactions that it has already processed.

b) Use when Extract was repositioned to a new start point (ATCSN or AFTERCSN option of “START EXTRACT”) and you are confident that there are duplicate transactions in the trail that could cause Replicat to abend.

c) This option requires the use of a checkpoint table.

d) If the database is Oracle, this option is valid only for Replicat in nonintegrated mode.

e) The default is FILTERDUPTRANSACTIONS

f) To override this, use NOFILTERDUPTRANSACTIONS
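In the GoldenGate command reference, these two keywords are documented as options of the START REPLICAT command. A minimal sketch, assuming the REP1 group from this article, a nonintegrated Replicat and an existing checkpoint table, could look like this. Treat the exact punctuation as an assumption and check it against the reference for your release.

```
-- Default behaviour spelled out explicitly: ignore transactions already processed
START REPLICAT REP1, FILTERDUPTRANSACTIONS

-- Override of the default, shown commented out because it must not be used
-- together with Automated Remote Trail File Recovery:
-- START REPLICAT REP1, NOFILTERDUPTRANSACTIONS
```

Because FILTERDUPTRANSACTIONS is the default, a plain START REPLICAT REP1 should behave the same way as the first command.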

Hope you found this article useful.

Cheers :-)


very nice explaining

Thank you Amar.

Hi Veeratteshwaran,

Does stopping a Replicat corrupt the trail file?

No

Hi Mahesh,

The major reasons for trail file corruption are as follows:

1) Extract is writing to the trail, and a portion around the beginning and end of a record becomes corrupt. In this case, GoldenGate is missing a portion of the data.

2) Two Extracts from different nodes are accidentally configured to write to the same trail on a remote system. Both Extracts will be writing data over each other. Replicat may be processing what looks like good data from one Extract, but eventually the second Extract will write data over the record that Replicat is currently processing.

3) A similar situation can occur when Extract is writing to a trail that existed for a previous Extract group. When the new Extract starts to write to the trail, it starts writing at trail sequence 0, RBA 0. Replicat will process fine until it reaches the old data.

4) Network failure between the source and the target.

5) Incorrect dismount and mount of the file systems where the trail files reside.

~Veera

Hi, Veeratteshwaran

How did you destroy the remote trail file?

I used vim to delete some lines of a trail file and then started the Replicat process, but I didn’t get any errors in ggserr.log. My GoldenGate version is 12.3.

Hi,

I did the same thing to corrupt the trail file. I opened a trail file with vi and removed some lines from it, and the trail file got corrupted.

~Veera

Hi Veera,

Very well explained. I would like to know about the scenario where the trail file is deleted accidentally. Should we follow the same procedure?

Regards,

Harish

Hi Harish,

There are two cases here.

1. Target trail file deleted / corrupted

2. Source trail file deleted / corrupted

For Case 1, we can follow automated remote trail file recovery, if the prerequisites are met.

For Case 2, we need to check the LOGBSN value of the target Replicat process and then re-extract the data from the source database, provided that the required archive logs are available.

Regards,

Veera

When the EXTTRAIL files on the source side are deleted or renamed without altering the Extract process, how do the old trail files get regenerated, given that Extract has already passed that extseq#?